Over the last year we have seen a new oddity in the cloudosphere: cloud dropouts that have forsaken the cloud for the financial predictability of physical infrastructure. At the same time, we have seen other companies touting the compelling economic benefits they derive from the cloud. How can these two disparate points of view coexist?

Comparing Cloud vs. Physical

In an attempt to answer the question, I have done a cost comparison of a fictitious application consisting of 15 web servers, 25 application servers, 4 database servers and 50 TB of storage. I priced the physical hardware using all Dell equipment (PowerEdge rack servers and an EqualLogic storage array), and priced the cloud using Amazon Web Services (EC2, EBS).

Here is a comparison of the costs of this application deployed with physical infrastructure versus the cloud:

[table id=9/]

As you can see, in year 1 the cloud is 22% less expensive than physical infrastructure. A compelling cost advantage, right? Well, not so fast. Let’s extend this analysis into years 2 and 3, where we realize the benefits of the one-time capital expenditure for physical hardware:

[table id=10/]

The result is that over 3 years, we actually spend $224,000 more (+40%) to deploy and operate this application in the cloud. This leads to the obvious question: if the cloud is more expensive than physical infrastructure, why would anyone be moving to the cloud?

Elasticity & Utilization

The problem with this cost comparison is that it does not take into account the primary advantage of the cloud: elasticity. The presumption above is that we have deployed infrastructure in year 1 to support an application that achieves near-complete utilization of the underlying hardware through year 3. In other words, if we provisioned a 50 TB SAN, the assumption is that we were able to utilize all 50 TB each year. What if we only utilized 10 TB in year 1, or 25 TB in year 2?

The average application designed for the cloud achieves higher resource utilization, thanks to the ease of provisioning and de-provisioning and the behavioral influence of consumption-based pricing. Inefficient usage of physical infrastructure is often driven by the lead time to order and deploy hardware. Some common examples include:

- Incorrect growth/usage projections

- Building for peak utilization

- Building ahead of growth

- Building for temporary utilization

When Cloud Is Cost Effective

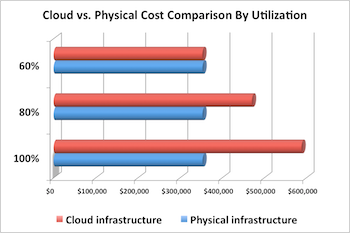

Below is a three-year model of our application at different utilizations: 100%, 80% and 60%.

[table id=11/]

As you can see, the cost advantage of physical infrastructure disappears when this hardware is operated at 60% utilization or less.
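As a rough sketch of where that crossover falls, the snippet below backs approximate three-year totals out of the figures quoted earlier (the cloud costs $224,000, or 40%, more at full utilization) and assumes cloud spend scales linearly with utilization while physical spend stays fixed. Both the derived totals and the linear scaling are assumptions for illustration; the spreadsheet behind the tables may model things differently.

```python
# Approximate three-year totals derived from the "+$224,000 (+40%)" figure.
# These are back-of-the-envelope values, not numbers taken from the tables.
PHYSICAL_3YR = 560_000      # 224,000 / 0.40
CLOUD_3YR_FULL = 784_000    # physical total + 224,000, at 100% utilization


def cloud_cost(full_cost, utilization):
    """Cloud cost if spend scales linearly with utilization (assumption)."""
    return full_cost * utilization


def break_even_utilization(physical_cost, cloud_full_cost):
    """Utilization below which the cloud becomes the cheaper option."""
    return physical_cost / cloud_full_cost


for u in (1.0, 0.8, 0.6):
    cloud = cloud_cost(CLOUD_3YR_FULL, u)
    cheaper = "cloud" if cloud < PHYSICAL_3YR else "physical"
    print(f"{u:.0%} utilization: cloud ${cloud:,.0f} vs physical "
          f"${PHYSICAL_3YR:,.0f} -> {cheaper} is cheaper")

break_even = break_even_utilization(PHYSICAL_3YR, CLOUD_3YR_FULL)
print(f"Break-even utilization: {break_even:.1%}")
```

Under these assumptions the break-even lands at roughly 71% utilization, which is consistent with the 80% and 60% scenarios falling on opposite sides of the physical cost.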

A simple method to calculate the utilization of your infrastructure is to assign a percentage to each device based on the usage it will have in the specified period. So if your 50 TB SAN is using 25 TB, you are running at 50% utilization. If you have sized your web server infrastructure for a peak that lasts 36 days out of the year, and usage for the rest of the year runs at 75% of that peak, your average utilization will be about 77%.
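The calculation above can be sketched as a time-weighted average. The function name and the input format are illustrative choices, and the web server case assumes off-peak load sits at 75% of provisioned capacity, which is the reading that reproduces the 77% figure.

```python
def average_utilization(periods):
    """Time-weighted average utilization.

    periods: list of (days, fraction_of_capacity_used) tuples.
    """
    total_days = sum(days for days, _ in periods)
    if total_days <= 0:
        raise ValueError("periods must cover at least one day")
    return sum(days * used for days, used in periods) / total_days


# 50 TB SAN with 25 TB in use all year.
san = average_utilization([(365, 25 / 50)])

# Web tier sized for peak: 36 days at full capacity,
# the remaining 329 days at 75% of capacity (assumed off-peak level).
web = average_utilization([(36, 1.00), (329, 0.75)])

print(f"SAN utilization: {san:.0%}")
print(f"Web tier utilization: {web:.0%}")
```

The same function extends to more than two periods, for example a seasonal application with several distinct load levels across the year.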

Conclusions

How is it that we have organizations both winning and losing on the economics of the cloud? The answer is that some organizations have use cases that make effective use of the cloud, and some do not. Use cases that would achieve 60-70% or better utilization on physical infrastructure will likely not be cost-effective in the cloud today. So before you move that internal IT application to a public cloud, you should ask yourself: is this a cost-effective decision?

I’d be happy to share the spreadsheet that produced these models. An inherent assumption here is that the software is equally effective (or ineffective) at utilizing the underlying infrastructure whether it runs in the cloud or on physical hardware. This raises another cost advantage of the cloud we can discuss in a future post: architecting for the cloud.

For additional reading, check out Sonian CTO Greg Arnette's post: Gaming the Cloud: Start With the Right "Cloud" Use Case.